-

One of the great things about making your own website is that you can build new things. One of the worst things about making your own website is that you keep building new things. After webmentions and a way to publish easily, the next thing on my list was a blogroll. Not just a page people can go to, but a way to surface the things I like to everyone who happens to visit my website.

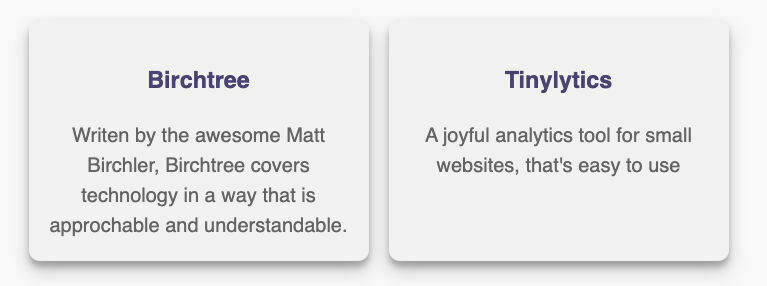

Thankfully, this was easy, and I took inspiration from Chris Hannah’s blogroll on GitHub. I started by creating two JSON files: one with information on the blogs I enjoy reading, and one with the products I like and would recommend to people.

After creating the files, I added an Eleventy filter that shuffles the list in order to pick a blog and product at random for each place this would appear. It was important to me that it cycles through as many as possible without being browser-intensive, so that my blog continues to serve static pages.

    // In the Eleventy config file
    eleventyConfig.addFilter("randomItem", (arr) => {
      // Shuffle a copy so the shared data array is not reordered in place
      const shuffled = [...arr].sort(() => 0.5 - Math.random());
      // Return a one-item array so it can be used in a for loop
      return shuffled.slice(0, 1);
    });

    return {
      dir: {
        input: "src",
        output: "public",
      },
    };

Then I can show them wherever I wish using {% raw %}{% for blog in blogroll | randomItem %}{% endraw %}. The best place for this is a partial, and at the moment it sits at the end of each blog post. However, I have plans to improve the design and be able to place it in more spaces.

I have a few things in the JSON files at the moment, but I have a feeling this will grow quickly.
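A shuffle is more work than is needed to pick a single entry. As a minimal sketch, assuming the data is a plain array, the same pick could be done by index. The name `pickRandomItem` and the sample data are hypothetical, not part of the site's actual config.

```javascript
// Hypothetical alternative to a sort-based pick: choose one entry by index.
// `random` is injectable so the function can be tested deterministically.
function pickRandomItem(items, random = Math.random) {
  if (!Array.isArray(items) || items.length === 0) {
    return [];
  }
  const index = Math.floor(random() * items.length);
  // Wrapped in an array so a template for-loop over the result still works.
  return [items[index]];
}

// Made-up sample data, only for illustration.
const sampleBlogroll = [
  { title: "Blog A", url: "https://example.com/a" },
  { title: "Blog B", url: "https://example.com/b" },
  { title: "Blog C", url: "https://example.com/c" },
];

console.log(pickRandomItem(sampleBlogroll));
```

This leaves the input array untouched and gives each entry an equal chance. It could be registered as an Eleventy filter the same way as any other function.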

-

In keeping with my ways, as soon as I published my rather long-winded method of pulling in pseudo webmentions, I knew I wasn’t satisfied. Functioning is one thing, but seeing all the code on a page and having trouble following it myself gave me a headache. I began working on updates I had planned for a few weeks later.

I am now just using the Mastodon API to pull in all the information I want to display.

    const fs = require('fs');

    // fetchUserPosts is not shown in this excerpt
    async function fetchAndUpdateUserPosts(instanceURL, accessToken, username) {
      try {
        const fetchedPosts = await fetchUserPosts(instanceURL, accessToken, username);
        const filePath = `./_cache/mastodon_posts.json`;

        // Check if the file exists
        if (fs.existsSync(filePath)) {
          // Read existing posts from file
          const existingPosts = JSON.parse(fs.readFileSync(filePath, 'utf-8'));

          // Update or add new posts
          fetchedPosts.forEach(newPost => {
            const index = existingPosts.findIndex(oldPost => oldPost.id === newPost.id);
            if (index !== -1) {
              // Update existing post
              existingPosts[index] = newPost;
            } else {
              // Add new post
              existingPosts.push(newPost);
            }
          });

          // Write updated posts back to the file
          fs.writeFileSync(filePath, JSON.stringify(existingPosts, null, 2));
          console.log(`Posts for user ${username} fetched and updated successfully.`);
        } else {
          // Write fetched posts to a new file
          fs.writeFileSync(filePath, JSON.stringify(fetchedPosts, null, 2));
          console.log(`Posts for user ${username} fetched and saved successfully.`);
        }
      } catch (error) {
        console.error('Error:', error);
      }
    }

Full gist here.

For this, you will need an access token for the API, which is available in your settings - more info here. You will also need a ‘username’, which isn’t your actual handle but the account ID that the API uses. More information on how to get this is available here.
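As a rough sketch of the two API calls involved, assuming Node 18+ (for the global `fetch`) and the standard Mastodon REST endpoints `/api/v1/accounts/lookup` and `/api/v1/accounts/:id/statuses`: the helper names `lookupAccountId` and `fetchStatuses` are hypothetical and are not the functions from the gist. The `fetchImpl` parameter is only there so the code can be exercised without touching the network.

```javascript
// Sketch only: hypothetical helpers, not the functions from the gist.

// Resolve a handle (e.g. "someone") to the account ID the API expects.
async function lookupAccountId(instanceURL, acct, fetchImpl = fetch) {
  const url = new URL('/api/v1/accounts/lookup', instanceURL);
  url.searchParams.set('acct', acct);
  const response = await fetchImpl(url.toString());
  if (!response.ok) {
    throw new Error(`Account lookup failed: ${response.status}`);
  }
  const account = await response.json();
  return account.id;
}

// Fetch the account's own posts, skipping replies and boosts.
async function fetchStatuses(instanceURL, accessToken, accountId, fetchImpl = fetch) {
  const url = new URL(`/api/v1/accounts/${accountId}/statuses`, instanceURL);
  url.searchParams.set('exclude_replies', 'true');
  url.searchParams.set('exclude_reblogs', 'true');
  const response = await fetchImpl(url.toString(), {
    headers: { Authorization: `Bearer ${accessToken}` },
  });
  if (!response.ok) {
    throw new Error(`Fetching statuses failed: ${response.status}`);
  }
  // Each status carries favourites_count, reblogs_count and replies_count.
  return response.json();
}
```

Keeping the token in an environment variable rather than in the script avoids committing it to the repository.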

The full script checks for changes to the cached file and only updates changes, which is handy for adding new comments, likes, or when you edit your post.

Displaying Information

This method makes displaying the information much easier, as the old way was a complete mess. It illustrates the learning process here!

Now I just need

{% raw %}

    {% set mastoContent = mastodon | searchContentForUrl(page.url | url | absoluteUrl(metadata.url)) %}

{% endraw %}

so in my post I can then display the toot content, likes, boosts and a comment count. For instance, my toot data is:

{% raw %}

    <div><i class="fa-regular fa-star"></i> {{ mastoContent.favourites_count}}</div>
    <div><i class="fa-regular fa-comment"></i> {{ mastoContent.replies_count}}</div>
    <div><i class="fa-solid fa-rocket"></i> {{ mastoContent.reblogs_count}}</div>

{% endraw %}

Future Plans

This new approach, although much cleaner, disregards webmentions from any source other than Mastodon. I am still pulling these in but displaying them on a private dashboard in my CMS. My plan is to filter out the Mastodon-related ones and display the rest on the post page as well. It will take me a while to figure out the best way to show them, and at that point I will also pull in all the old data from my old blog.
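One possible shape for that filter, as a sketch under stated assumptions: it assumes each cached webmention has `wm-source` and `url` fields, and that a Mastodon-related mention can be recognised either by a known instance hostname or by `/mastodon/` appearing in the source path (as a bridging service might produce). The function names and the host list are hypothetical.

```javascript
// Hypothetical check: does this webmention entry look like it came from Mastodon?
function isMastodonMention(entry, mastodonHosts) {
  const candidates = [entry['wm-source'], entry.url].filter(Boolean);
  return candidates.some((value) => {
    try {
      const parsed = new URL(value);
      return (
        mastodonHosts.includes(parsed.hostname) ||
        parsed.pathname.includes('/mastodon/')
      );
    } catch {
      // Not a valid URL, so it cannot be matched.
      return false;
    }
  });
}

// Keep only the mentions that did not come from Mastodon.
function otherMentions(entries, mastodonHosts) {
  return entries.filter((entry) => !isMastodonMention(entry, mastodonHosts));
}

// Made-up sample entries, only for illustration.
const sampleEntries = [
  {
    'wm-source': 'https://bridge.example/like/mastodon/@a@example.social/1/2',
    url: 'https://example.social/@a/1',
  },
  {
    'wm-source': 'https://another.example/post',
    url: 'https://another.example/post',
  },
];

console.log(otherMentions(sampleEntries, ['example.social']));
```

The heuristics would need checking against real cached data before relying on them.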

-

I’ve been struggling to write about much else besides writing. Sure, it’s occupying much of my time right now, but it isn’t a subject I typically cover. This isn’t a ‘worry’, but a post I read earlier highlighted factors that might be affecting my slump.

A post on the Meadow blog, about reading lots of blogs to be able to write widely, states:

There are no real guidelines on how to create posts. There are no expectations you need to fulfill, no boxes you need to check. There’s nothing you have to do besides doing whatever you want.

This simple statement is easy to forget. I still worry about the way I format my blog posts and what to write about, but ultimately it doesn’t matter. These meta posts are fine, because anything is fine. There’s no pressure to have a ‘thing’, and people seem to read them anyway. So, insert shrug emoji?

I’m 100% on board with the comments about the need to read a lot to write more. My usual inspiration for posts comes from the things I consume. Occasionally podcasts and TV shows, but nothing seems to prod my inspiration as much as reading books and blog posts. Something that generally falls away once I become busy.

Returning to the things I enjoy sparks the creativity to do other things I enjoy, and everything becomes right with the world again.

-

This week I have been building parts of my blog, both to learn and to get it to a point that I am happy with. A large part of this was webmentions, and thanks to a few excellent guides I got 90% of the way there. With only a few design things left to think through, I read more posts on webmentions and realised the way I was doing it was the best solution for privacy, and so here we are with a reduced version.

I need to preface this with the fact I am a noob, and this might be a) wrong or b) a stupid way of doing it. This is just the way I did it, and if you’ve got some advice I am all ears.

Webmentions

I had already implemented a script to pull these from webmention.io and cache these into a local file.

    // Import required modules
    const fetch = require('node-fetch');
    const fs = require('fs');
    const path = require('path');
    require('dotenv').config();

    // Constants
    const CACHE_DIR = './_cache';
    const API_ORIGIN = 'https://webmention.io/api/mentions.jf2';
    const TOKEN = process.env.WEBMENTION_IO_TOKEN;

    // Ensure cache directory exists
    if (!fs.existsSync(CACHE_DIR)) {
      fs.mkdirSync(CACHE_DIR);
    }

    // Function to fetch data from the API
    async function fetchData() {
      try {
        const response = await fetch(`${API_ORIGIN}?token=${TOKEN}`);
        if (!response.ok) {
          throw new Error(`Failed to fetch data: ${response.status} ${response.statusText}`);
        }
        const data = await response.json();
        return data;
      } catch (error) {
        console.error('Error fetching data:', error.message);
        throw error;
      }
    }

    // Function to save data to JSON file
    function saveDataToFile(data) {
      try {
        const filePath = path.join(CACHE_DIR, 'webmentions.json');
        fs.writeFileSync(filePath, JSON.stringify(data, null, 2));
        console.log('Data saved to:', filePath);
      } catch (error) {
        console.error('Error saving data to file:', error.message);
        throw error;
      }
    }

    // Main function to fetch data and save it to file
    async function main() {
      try {
        const data = await fetchData();
        saveDataToFile(data);
      } catch (error) {
        console.error('An error occurred:', error.message);
        process.exit(1);
      }
    }

    // Run main function
    main();

Instead of loading all of the information from this as most guides do, I decided to simply count the important details. I think this could be cleaner, but it’s working, and that’s all I am aiming for at the moment.

{% raw %}

    // Body of the counting filter: `data` is the parsed cache, `url` is the page URL
    const filteredWebmentions = data.children.filter(entry => {
      return entry['like-of'] === url || entry['in-reply-to'] === url || entry['repost-of'] === url;
    });

    // Initialize counts for each type
    let likeCount = 0;
    let replyCount = 0;
    let repostCount = 0;

    // Loop through each entry in the filtered webmentions
    filteredWebmentions.forEach(entry => {
      // Check if the entry matches the given URL for like, reply, or repost
      if (entry['like-of'] === url) {
        likeCount++;
      }
      if (entry['in-reply-to'] === url) {
        replyCount++;
      }
      if (entry['repost-of'] === url) {
        repostCount++;
      }
    });

    // Return an object containing the counts
    return { likeCount, replyCount, repostCount };

{% endraw %}

This is then displayed on my post using:

{% raw %}

    {% set urlnohtml = page.url | url | absoluteUrl(metadata.url) | replace('.html', '') %}
    {% set urlhtml = page.url | url | absoluteUrl(metadata.url) %}
    {% set countnohtml = webmentionsFilePath | countWebmentions(urlnohtml) %}
    {% set counthtml = webmentionsFilePath | countWebmentions(urlhtml) %}
    {% set totallikes = countnohtml.likeCount + counthtml.likeCount %}
    {% set totalreplies = countnohtml.replyCount + counthtml.replyCount %}
    {% set totalrepost = countnohtml.repostCount + counthtml.repostCount %}

{% endraw %}

This is not very efficient. I ran into an issue where I had shared some links with .html on the end and some without, so I count both variants and add them together. It is an MVP that I got working late last night.
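One way to avoid counting twice would be to normalise both the page URL and the mention targets before comparing them. This is a minimal sketch, assuming the cached entries carry `like-of`, `in-reply-to` and `repost-of` as plain strings; the names `normaliseUrl` and `countMentions` are hypothetical and the sample data is made up.

```javascript
// Strip a trailing ".html" so both URL variants compare as equal.
function normaliseUrl(url) {
  return typeof url === 'string' ? url.replace(/\.html$/, '') : '';
}

// Count likes, replies and reposts for one page in a single pass.
function countMentions(entries, pageUrl) {
  const target = normaliseUrl(pageUrl);
  const counts = { likeCount: 0, replyCount: 0, repostCount: 0 };
  for (const entry of entries) {
    if (normaliseUrl(entry['like-of']) === target) counts.likeCount++;
    if (normaliseUrl(entry['in-reply-to']) === target) counts.replyCount++;
    if (normaliseUrl(entry['repost-of']) === target) counts.repostCount++;
  }
  return counts;
}

// Made-up sample entries, only for illustration.
const sampleMentions = [
  { 'like-of': 'https://example.com/post.html' },
  { 'like-of': 'https://example.com/post' },
  { 'in-reply-to': 'https://example.com/post' },
  { 'repost-of': 'https://example.com/other' },
];

console.log(countMentions(sampleMentions, 'https://example.com/post.html'));
```

With this approach the template would only need one call per page instead of two calls and three sums.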

Mastodon Information

I could then link people to the resulting Mastodon post but decided I wanted to go further and display the information from the toot.

The easy way would have been to rely on the embed code and link to the toot, but I decided to make as many things static as possible. Instead, I pull the RSS feed from my account and filter it by the page URL.

{% raw %}

    const axios = require('axios');
    const Parser = require('rss-parser');
    const fs = require('fs');

    const rssFeedUrl = 'https://social.lol/@gr36.rss';
    const jsonFilePath = './_cache/mastodon.json';

    // Read the previously saved entries (full objects) from the JSON file
    const readSavedEntries = () => {
      try {
        return JSON.parse(fs.readFileSync(jsonFilePath, 'utf8'));
      } catch (error) {
        // If the file doesn't exist or is empty, return an empty array
        return [];
      }
    };

    axios.get(rssFeedUrl)
      .then(response => {
        const parser = new Parser();
        return parser.parseString(response.data);
      })
      .then(feed => {
        const savedEntries = readSavedEntries();
        const savedEntryUrls = savedEntries.map(entry => entry.link);
        const newEntries = feed.items.filter(item => !savedEntryUrls.includes(item.link));

        if (newEntries.length === 0) {
          console.log('No new entries found.');
          return;
        }

        const items = newEntries.map(item => ({
          link: item.link,
          pubDate: item.pubDate,
          content: item.content
        }));

        // Keep the full saved entries, not just their links, when rewriting the cache
        const jsonData = JSON.stringify([...items, ...savedEntries], null, 2);
        fs.writeFile(jsonFilePath, jsonData, err => {
          if (err) {
            console.error('Error writing JSON file:', err);
            return;
          }
          console.log(`${newEntries.length} new entries saved to ${jsonFilePath}`);
        });
      })
      .catch(error => {
        console.error('Error fetching or parsing RSS feed:', error);
      });

{% endraw %}

This is then parsed into JSON (I just think it looks better) and stored in a cache file.

I can then query this for specific page urls using

{% raw %}

    // In the Eleventy config file; assumes fs is already required there
    eleventyConfig.addFilter('searchContentForUrl', (mastodonData, currentPageUrl) => {
      // Load the JSON data (the mastodonData argument is not used here)
      const jsonData = JSON.parse(fs.readFileSync('./_cache/mastodon.json', 'utf8'));

      // Iterate over entries and search for the current URL in content property
      for (const entry of jsonData) {
        if (entry.content.includes(currentPageUrl)) {
          return entry;
        }
      }

      return null; // Return null if URL is not found in any content property
    });

{% endraw %}

And display things on the post page using

{% raw %}

    {% set mastoContent = mastodon | searchContentForUrl(urlnohtml) %}

{% endraw %}

I then show this with:

{% raw %}

    <a href="{{ mastoContent.link}}">
      <i class="fa-brands fa-mastodon"></i> Join the conversation on Mastodon
      <div>{{ mastoContent.content | safe }}</div>
    </a>

{% endraw %}

Future Plans

Like I said right at the start, I am a complete noob with front end and I am currently studying to improve things. I’d like to pull all the information once using the Mastodon API, so I have access to replies_count, reblogs_count and favourites_count, but that can wait for now.

This only shows on a few posts due to a change in URL, but I am planning to pull in old data and show this too. All in all, I’m fairly happy with it for now, considering it was cobbled together fairly quickly when I changed my mind on what I wanted to display. Any helpful recommendations will be happily received.

-

For the last few weeks, I’ve fallen out with myself and the place I publish all of my things online. I don’t want to go into the specifics, but I turned back from the brink not that long ago and don’t want to go there again. As such, I’ve been learning to build my own static blog with 11ty. It’s slow-going, but that’s the point.

If I am honest with myself, hosting my blog and everything else with micro.blog is the easy way to do it, but making myself find solutions for things forces me to readdress my desires. It is easy for me to point at things like micropub, webmentions and the like, but when I have to do the work to implement them, I have to be certain what I want to achieve.

Seems counterintuitive, doesn’t it? That was my initial reaction too, and there might be some confirmation bias going on, but the more I thought about it, the more sense it made. Out of the box, 11ty is powerful, but has a high barrier to entry. There are starter themes to clone and get you going, but to really take advantage of what it can do takes time and knowledge. Knowledge I barely have.

I managed to get a rudimentary CMS up and working, along with permalinks working the way I want them to, but after a few hours of head scratching, the really in-depth features were still lacking. It would be easy enough to just give up again, but these technical skill barriers are there to be solved. It made me think about how easy it is to yell for a feature on a platform, but when you have to build it yourself it’s a different story. The barrier prompts internal decisions that might otherwise never arrive.

It also made me laugh at some points where I have jumped into ‘new’ features without actually thinking about how they help and how useful they are. Ultimately, I’m willing to do the work to get the features I want, such as webmentions, but some are falling away. As I spoke about before, this is supplementing my current front end certificate, so it’s win/win at the moment.